Stop, pause, slow down or accelerate? What to do next with AI (3/n)

Should we encourage uncertainty? Is the only way forward to move faster? Today we explore the final two values of Acceleration AI Ethics.

In Part 1 we covered innovation as an intrinsic value. In Part 2 we explored decentralisation and embedding. If you haven’t read those posts, I’d encourage you to do so. They offer up important context.

As with the previous posts, this is a time-boxed writing activity. I’ve got about 60 mins, so let’s see where we get to.

Back to it.

Today’s focus is uncertainty and doing more, faster.

These values draw from James Brusseau’s follow-up Medium post. Collectively they describe the values and attitudes behind the Acceleration AI Ethics proposal.

Uncertainty is encouraging. The fact that we do not know where generative or linguistic AI will lead is not perceived as a warning or threat so much as an inspiration. More broadly, the unknown itself is understood as potential more than risk. This does not mean potential for something, it is not that advancing into the unknown might yield desirable outcomes. Instead, it is advancing into uncertainty in its purity that attracts, as though the unknown is magnetic. It follows that AI models are developed for the same reason that digital-nomads travel: because we do not know how we will change and be changed.

Innovation is intrinsically valuable. A link forms between innovation in the technical sense and creation in the artistic sense: like art, technical innovation is worth doing and having independently of the context surrounding its emergence. Then, because innovation resembles artistic creativity in holding value before considering social implications, the ethical burden tips even before it is possible to ask where the burden lies. Since there exists the positive value of innovation even before it is possible to ask whether the innovation will lead to benefits or harms, engineers no longer need to justify starting their models, instead, others need to demonstrate reasons for stopping.

The only way out is through: more, faster. When problems arise, the response is not to slow AI and veer away, it is straight on until emerging on the other side. Accelerating AI resolves the difficulties AI previously created. So, if safety fears emerge around generative AI advances like deepfakes, the response is not to limit the AI, but to increase its capabilities to detect, reveal, and warn of deepfakes. (Philosophers call this an excess economy, one where expenditure does not deplete, but increases the capacity for production. Like a brainstormed idea facilitates still more and better brainstorming and ideas, so too AI innovation does not just perpetuate itself, it naturally accelerates.)

Decentralization. There are overarching permissions and restrictions governing AI, but they derive from the broad community of users and their uses, instead of representing a select group’s preemptory judgments. This distribution of responsibility gains significance as technological advances come too quickly for their corresponding risks to be foreseen. Oncoming unknown unknowns will require users to flag and respond, instead of depending on experts to predict and remedy.

Embedding. Ethicists work inside AI and with engineers to pose questions about human values, instead of remaining outside development and emitting restrictions. Critically, ethicists team with engineers to cite and address problems as part of the same creative process generating advances. Ethical dilemmas are flagged and engineers proceed directly to resolution design: there is no need to pause or stop innovation, only redirect it.

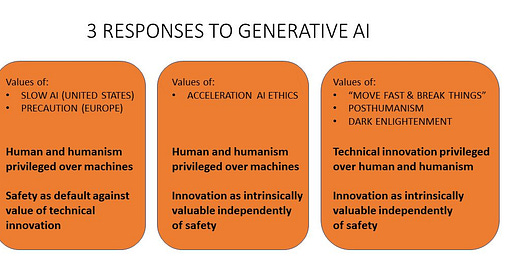

Before continuing, let me highlight an image James posted on LinkedIn. This was, as per the previous post, in response to the questions from Evan Selinger.

What we now have is a clearer, albeit simplified, view of where the acceleration proposal sits along the spectrum from “do nothing, it’s just too scary”, to “f$#k it, cat’s out of the bag, folks. Let’s just see where this shit lands.”

Although there are certainly warring factions in this space, which isn’t actually new, it does seem that there is overwhelming support for slowing down and precaution. I can understand, based on many of the variables at play, why this is preferred by the majority.

One very clear reason I think this might make sense is that ethics washing in a general sense is utterly rife. Corporations rarely, as per the Sutcliffe et al. model of trustworthiness I so often use, demonstrate they are worthy of trust (I can expand on this for days. No time right now). This, in many ways, is captured rather well by Sull et al. as they explore that wide gap between what corporations state they value and how they seem to behave.

I’ve had very deep exposure to dozens of organisations throughout my career, from federal government departments through to major corporations, startups, research and policy institutes, VCs and other types of structures like social enterprises or customer-owned organisations (mutuals). In almost all cases, the ethical intent-action gap is huge.

On this basis alone, and given the justifiable scepticism that may arise from corporate control of generative AI, there is a darn good case (no claim as to the probability of this happening, or of its efficacy if it does) for slowing down, at least in many contexts.

Anyways, I’m going to come back to this more over the coming weeks and months. There’s so much to work through. I hope that more of us create the space to do this with (com)passion, curiosity, humility and a real desire to learn and apply.

Uncertainty is encouraging

Okay, where do we even begin…? As a general observation about life I’d say very few folks walk around radically accepting uncertainty. Through most of what we do we seek to command and control the world around us.

Given this, and without the time to qualify it further, I’d say there’s a beauty to what James is suggesting. But the fact that a fairly radical perspective like this has a certain beauty, given the possibilities it might enable, doesn’t mean it’s good and right.

Whether this is good and right really depends on:

The normative theory we draw from (deontology/teleology, virtue ethics, consequentialism etc. I’m certainly not a metaethicist, but there’s plenty here to discuss also IMO)

Specific ethical frameworks we might use (e.g. Principlism, which seems very popular in the AI Ethics space)

The ways in which those theories / frameworks are applied in specific contexts (to the development of a specific model)

The judgement that people place on the intent behind a given project / model (in most of law and life we seem to care deeply about the intent of the action, not just the consequences. I recognise some folks argue that literally everything is just consequences. I’ll leave that aside for now)

The judgement that people place on the outcomes of the model (from the very best to the very worst) and how they map to what we value, what we believe to be right, the problems we feel are truly worth solving etc.

Pick your poison. We could add plenty more to this list.

I recognise the list above follows the typical upfront / technocratic(ish) / deliberative process of moral analysis, one that is rarely integrated with / embedded into the practical workflows of product / service / model development.

Based on what James is suggesting, the value here is somewhat in the inherent beauty of the journey (its uncertainty, variance, what emerges from this etc.). How this is factored into, weighted against etc. the ‘traditional’ ways we think about this stuff is presently unclear to me.

Given my time box, I’m moving on. But let’s work through this via different channels. Share with me your perspective on the proposal that uncertainty, in the context James has described, ought to be valued (and, as a result, helps make the case for acceleration).

More, faster

The only way around the wall is through it.

Joking.

But seriously, let’s explore this a little. In this value / attitude, James proposes that the answer to issues caused (or enabled / accelerated / amplified) by AI is more AI. Is this a bit like saying we can solve the climate / ecological / water / (everything within the biosphere) crisis with more fossil fuels, more extraction, more exploitation and more growthism? I don’t think so, but a lot of folks I’ve talked to about this have near immediately reacted in this way.

Where I’m presently struggling with this (recognising the limited time I’ve given it thus far) is that the consequences of getting stuff wrong, the runaway effects etc. could be quite significant. Quite significant indeed. I’m not sure whether it’s naive to think that this could work. I’m not sure what historical evidence James is drawing on. I’m not sure how much of this sounds to me like the sunk cost fallacy.

On the flip side, I’m asking myself how well ‘hard’ regulation of stuff like this has worked in the past. A mixed bag for sure. I’m also pondering whether, given the momentum, it’s even possible to slow down. And if it’s not possible, surely Acceleration is a far better option than the far right of the spectrum above, which moves fast, breaks shit and leaves a lot of collateral damage in its path.

Then there’s the obvious bit about the ethical intent-action gap. We’ve had AI Ethics Principles for a while. They’re basically all the same, as is highlighted by the analysis from the AI Ethics Lab I reference above. And even though this is the case, my experience is that, generally speaking, this stuff has limited impact on operational functions within real-world organisations. Could a well thought out operational approach to Acceleration AI Ethics help close some of the ethical intent-action gap? Might this sort of approach lead to more positive behaviour change? (Frankly, this is where a lot of this stuff falls over from the get go. If you’re a behavioural scientist, I’d argue this is an opportunity for you. Happy to chat and share more detail here.)

All of this is, you guessed it, uncertain.

What I am (within reason) ‘certain’ of is that we need to stop the warring bullshit. We face a polycrisis, folks. Life’s a big deal. It’s bloody brilliant in a million ways (but it’s also hard, brutal, devastating etc. I get all that. No time for further caveats). It’s worth protecting, embracing and radically improving. We need to realise that we are literally and figuratively in this together. There is more that connects us than divides us.

I feel pretty confident that a more compassionate, open, curious and humble approach - grounded in some serious rigour - can help us move in a direction where generative AI is far more positive than negative. This is pure speculation of course, but without hope, what the fuck is the point?

With love. That’s my time-box done for today.